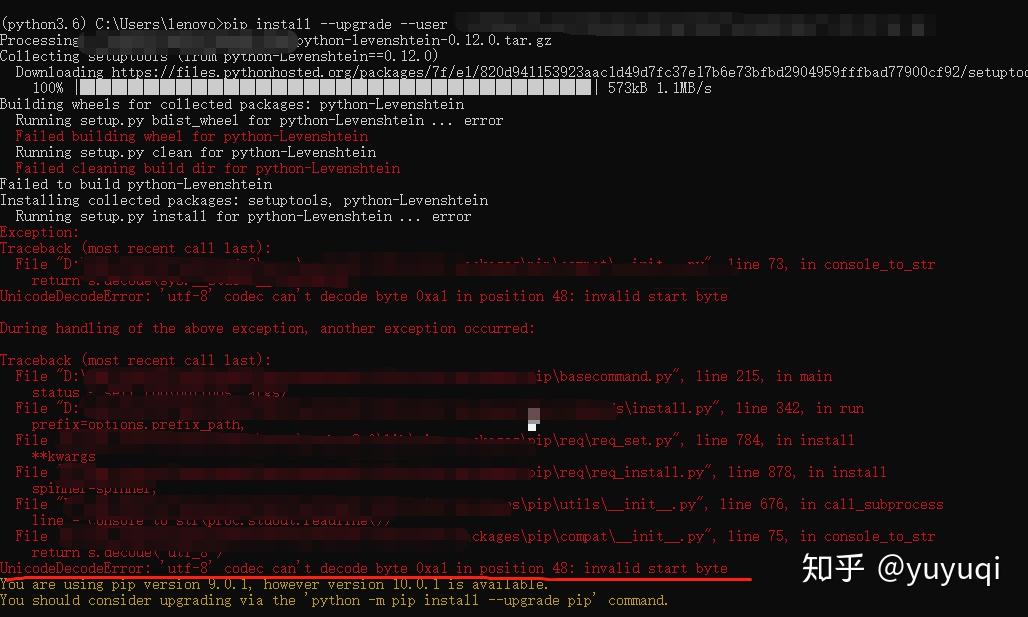

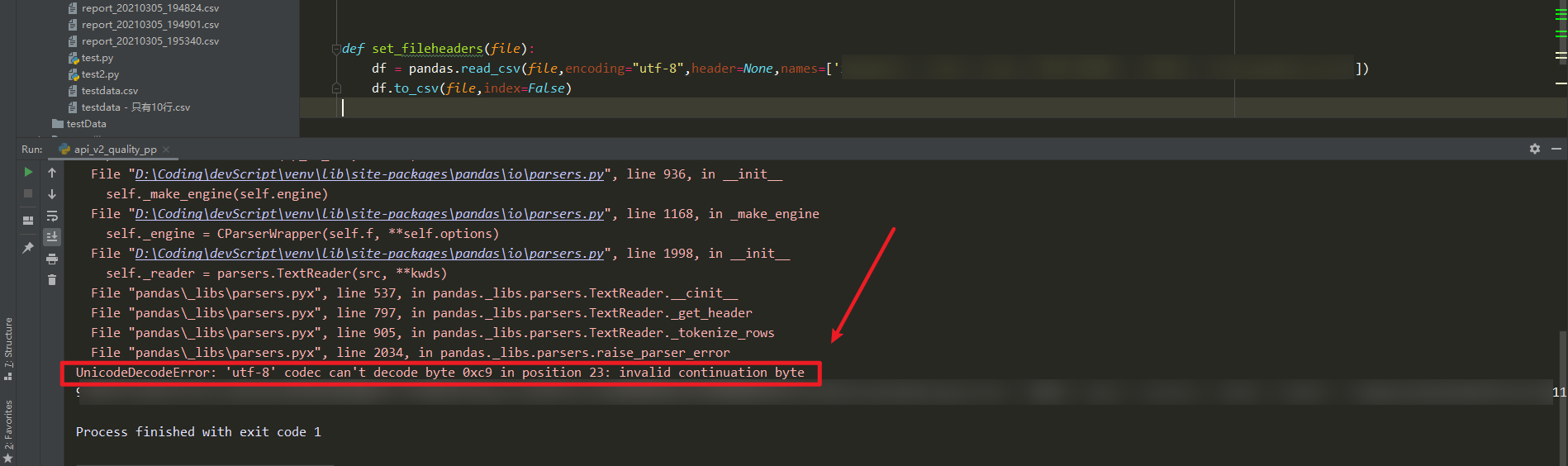

I'm running a program which is processing 30,000 similar files. A random number of them are stopping and producing this error:

    File "C:\Importer\src\dfman\importer.py", line 26, in import_chr
      data = pd.read_csv(filepath, names=fields)
    File "C:\Python33\lib\site-packages\pandas\io\parsers.py", line 400, in parser_f
    File "C:\Python33\lib\site-packages\pandas\io\parsers.py", line 205, in _read
    File "C:\Python33\lib\site-packages\pandas\io\parsers.py", line 608, in read
    File "C:\Python33\lib\site-packages\pandas\io\parsers.py", line 1028, in read
    File "parser.pyx", line 706, in (pandas\parser.c:6745)
    File "parser.pyx", line 728, in ._read_low_memory (pandas\parser.c:6964)
    File "parser.pyx", line 804, in ._read_rows (pandas\parser.c:7780)
    File "parser.pyx", line 890, in ._convert_column_data (pandas\parser.c:8793)
    File "parser.pyx", line 950, in ._convert_tokens (pandas\parser.c:9484)
    File "parser.pyx", line 1026, in ._convert_with_dtype (pandas\parser.c:10642)
    File "parser.pyx", line 1046, in ._string_convert (pandas\parser.c:10853)
    File "parser.pyx", line 1278, in pandas.parser._string_box_utf8 (pandas\parser.c:15657)
    UnicodeDecodeError: 'utf-8' codec can't decode byte 0xda in position 6: invalid continuation byte

These files all come from the same source. What's the best way to correct this to proceed with the import?

This is a more general script approach for the stated question. Pandas allows you to specify the encoding, but historically did not allow you to ignore errors nor to automatically replace the offending bytes (pandas 1.3 and later add an `encoding_errors` argument to `read_csv`). So there is no one-size-fits-all method, but different ways depending on the actual use case.

1. You know the encoding, and there is no encoding error in the file. Great: you just have to specify the encoding. Set `file_encoding = 'cp1252'` (or whatever the file encoding is: utf8, latin1, etc.) and pass it to `pd.read_csv` as the `encoding` argument.

2. You do not want to be bothered with encoding questions, and only want that damn file to load, no matter if some text fields contain garbage. OK, you only have to use the Latin1 encoding, because it accepts any possible byte as input (and converts it to the Unicode character with the same code): pass `encoding='latin1'` to `pd.read_csv`.

3. You know that most of the file is written with a specific encoding, but it also contains encoding errors. A real-world example is a UTF-8 file that has been edited with a non-UTF-8 editor and which contains some lines with a different encoding. Pandas has no provision for special error processing, but the Python `open` function has (assuming Python 3), and `read_csv` accepts a file-like object. Typical values for the `errors` parameter are `'ignore'`, which just suppresses the offending bytes, or (IMHO better) `'backslashreplace'`, which replaces the offending bytes with their Python backslashed escape sequence. Set `file_encoding = 'utf8'` (again, the encoding most of the file uses), open the file with `input_fd = open(input_file_and_path, encoding=file_encoding, errors='backslashreplace')`, and hand `input_fd` to `pd.read_csv`.

The same family of error also appears in Python 2 code, for example `UnicodeDecodeError: 'ascii' codec can't decode byte 0xd0 in position 0: ordinal not in range(128)`, and Python 3000 will prohibit encoding of bytes altogether, according to PEP 3137: "encoding always takes a Unicode string and returns a bytes sequence, and decoding always takes a bytes sequence and returns a Unicode string".
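The three cases described above can be tried out with a small sketch. To stay dependency-free it uses the standard-library `csv` module in place of pandas, so it illustrates only the decoding step, not `read_csv` itself. The sample data, the temporary file, and the `read_rows` helper are all made up for illustration.

```python
import csv
import os
import tempfile

# Hypothetical sample: a mostly UTF-8 CSV with one stray Latin-1 byte (0xDA).
raw = "name,city\nAna,Z\u00fcrich\n".encode("utf-8") + b"Bob,\xdaber\n"

with tempfile.NamedTemporaryFile(delete=False, suffix=".csv") as tmp:
    tmp.write(raw)
    input_file_and_path = tmp.name


def read_rows(path, encoding, errors="strict"):
    """Decode the file with the given encoding and error policy, then parse CSV."""
    with open(path, encoding=encoding, errors=errors, newline="") as input_fd:
        return list(csv.reader(input_fd))


# Strict UTF-8 decoding fails on the stray byte, like the traceback in the question.
try:
    read_rows(input_file_and_path, "utf8")
    strict_failed = False
except UnicodeDecodeError:
    strict_failed = True

# latin1 never fails, but valid UTF-8 sequences are read as garbage characters.
latin1_rows = read_rows(input_file_and_path, "latin1")

# UTF-8 with backslashreplace keeps the good text and escapes the bad byte.
escaped_rows = read_rows(input_file_and_path, "utf8", errors="backslashreplace")

os.remove(input_file_and_path)
print(strict_failed, latin1_rows[1], escaped_rows[2])
```

Since `read_csv` accepts a file-like object, a file opened this way with `errors='backslashreplace'` can be handed to pandas instead of `csv.reader`.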

The UnicodeDecodeError normally happens when decoding an `str` string from a certain coding (in Python 2, where `str` is a byte string). Since codings map only a limited number of `str` strings to unicode characters, an illegal sequence of `str` characters will cause the coding-specific `decode()` to fail.

    File "encodings/utf_8.py", line 16, in decode
    UnicodeDecodeError: 'utf8' codec can't decode byte 0x81 in position 0: unexpected code byte

Paradoxically, a UnicodeDecodeError may happen when _encoding_. The cause seems to be the coding-specific `encode()` functions, which normally expect a parameter of type `unicode`. It appears that on seeing an `str` parameter, the `encode()` functions "up-convert" it into `unicode` before converting to their own coding. It also appears that such "up-conversion" makes no assumption about the `str` parameter's coding, choosing a default ascii decoder. Hence a decoding failure inside an encoder.

Unlike a similar case with UnicodeEncodeError, such a failure cannot always be avoided. This is because the `str` result of `encode()` must be a legal coding-specific sequence. However, a more flexible treatment of the unexpected `str` argument type might first validate the `str` argument by decoding it, then return it unmodified if the validation was successful. As of Python 2.5, this is not implemented.

Alternatively, a TypeError exception could always be thrown on receiving an `str` argument in `encode()` functions.

    >>> "a".encode("utf-8")  # Unexpected argument type.
    >>> "\xd0\x91".encode("utf-8")  # Unexpected argument type.
